Teaching AI Intuition: Iterative Development of a Foundational Course Format

Iterative Development of a Foundational Course Format for AI Education in Design

How do you teach designers to work meaningfully with AI, without training them to become developers and without reducing the technology to a set of new tools in the creative toolkit? This question has driven the development of a foundational course in AI and Design, which has run since the summer semester of 2023 in the fourth semester of the Interaction Design programme at HfG Schwäbisch Gmünd.

The course makes the technical complexity of AI accessible to design students through a deliberate combination of theoretical input and hands-on experimentation. Its overarching goal is the development of AI Intuition [1], a grounded understanding of how AI systems work, what they can and cannot do, and how to engage with them critically and purposefully as a design material. This intuition equips students to develop a precise vocabulary for AI concepts, make informed decisions about when and how to use these technologies, and reflect critically on their societal, ethical, and ecological implications.

Over three years and six iterations, the course has been continuously reshaped in response to student feedback, technological change, and our own evolving understanding of what design education that engages with AI actually requires.

Course Modules

The course is built around a set of foundational modules, each addressing a different dimension of AI that is relevant to design practice. Together, they form a deliberate progression in terms of both content and complexity: from understanding the underlying functional principles of neural networks, to prototyping, to designing interfaces and interactions with and for AI, to working with generative AI technologies that increasingly shape creative and communicative processes.

Module 1: AI Fundamentals for Designers

A broad understanding of how AI technologies work is a prerequisite for using them thoughtfully, both as tools and as design material. The first module provides an accessible entry point into the landscape of AI technologies relevant to design practice, covering image classification, object detection, and segmentation, as well as text and image generation, speech synthesis, and sensor data analysis for interactive applications.

This overview is followed by an introduction to the principles of neural networks. To make these abstract concepts tangible, students work with SandwichNet, an interactive tool developed at the AI+Design Lab that visualises how neural networks learn by training a model to distinguish between edible and inedible sandwiches. By manipulating parameters and observing the model’s behaviour directly, students develop an intuitive feel for concepts that would otherwise remain purely theoretical.
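The following minimal Python sketch shows the kind of learning that a tool like SandwichNet makes visible. A single artificial neuron learns to separate edible from inedible sandwiches by gradient descent. This is not SandwichNet's code. The two features (freshness and mould, both scaled from 0 to 1), the six training examples, and the learning rate are all hypothetical.

```python
import math

# Hypothetical toy dataset: (freshness, mould) scaled to 0..1,
# label 1 = edible, 0 = inedible. Not SandwichNet's actual data.
DATA = [
    ((0.9, 0.0), 1),
    ((0.8, 0.1), 1),
    ((0.7, 0.2), 1),
    ((0.3, 0.8), 0),
    ((0.2, 0.9), 0),
    ((0.1, 0.6), 0),
]


def sigmoid(z: float) -> float:
    """Squash a weighted sum into a probability between 0 and 1."""
    return 1.0 / (1.0 + math.exp(-z))


def predict(weights, bias, features) -> float:
    """Forward pass of one neuron: weighted sum plus bias, then sigmoid."""
    z = sum(w * x for w, x in zip(weights, features)) + bias
    return sigmoid(z)


def train(data, learning_rate=0.5, epochs=500):
    """Fit the neuron with plain stochastic gradient descent on the log loss."""
    weights = [0.0, 0.0]
    bias = 0.0
    for _ in range(epochs):
        for features, label in data:
            # For sigmoid + log loss, the gradient w.r.t. z is (prediction - label).
            error = predict(weights, bias, features) - label
            weights = [w - learning_rate * error * x for w, x in zip(weights, features)]
            bias -= learning_rate * error
    return weights, bias


weights, bias = train(DATA)
print(f"weights={weights}, bias={bias:.2f}")
print(f"fresh sandwich:  {predict(weights, bias, (0.85, 0.05)):.2f}")
print(f"mouldy sandwich: {predict(weights, bias, (0.15, 0.85)):.2f}")
```

Changing `learning_rate` or `epochs` and re-running is a small version of the parameter manipulation the module relies on. With `epochs=0`, every prediction is exactly 0.5. With more training, the neuron's predictions move towards 0 and 1.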

The module also lays the foundation for an overarching mindset that we aim to promote throughout the course: AI is reshaping design practice in fundamental ways, changing not only the tools designers use, but the nature of the systems they design, the processes they follow, and the questions they need to ask. As technology shifts from passive tool to active counterpart, entirely new design challenges emerge around collaboration, autonomy, trust and ethics. This makes it all the more important that designers take an active and critical role in shaping future human-AI systems, moving well beyond the mere application of tools.

Module 2: Prototyping AI-Based Interactions

The second module makes the abstract principles introduced in Module 1 physically tangible through what we call Physical AI [2]: students work with low-cost microcontrollers (Arduino Nano 33 BLE Sense) and sensor data to build their own machine learning models, training them to recognise gestures and movement patterns in real time.

Students work through the complete ML development cycle, from collecting and labelling their own sensor data, to training a model, to deploying it on hardware that interacts with the physical world. For the technical implementation, they use Edge Impulse, an online platform that streamlines the entire workflow without requiring extensive programming knowledge. The use of affordable, accessible hardware and self-generated datasets keeps the models small and their behaviour legible, making the underlying mechanics of machine learning more directly observable.
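The cycle described above (collect, label, train, deploy) can be sketched in a few lines of Python. This is a deliberately simplified stand-in, not how Edge Impulse works internally. It makes the following assumptions:

- The sensor is a 3-axis accelerometer.
- Recordings are cut into overlapping windows.
- Each window is summarised by its per-axis mean and standard deviation.
- Classification uses a nearest-centroid rule instead of a neural network.

All data, window sizes, and labels are hypothetical.

```python
import math
from statistics import mean, pstdev

# Hypothetical settings; real tools typically use longer windows and richer features.
WINDOW = 4   # samples per window
STRIDE = 2   # step between window starts


def windows(samples, size=WINDOW, stride=STRIDE):
    """Cut a continuous recording of (x, y, z) samples into overlapping windows."""
    return [samples[i:i + size] for i in range(0, len(samples) - size + 1, stride)]


def features(window):
    """Summarise a window as per-axis mean and standard deviation (6 numbers)."""
    axes = list(zip(*window))  # [(x values), (y values), (z values)]
    return [mean(a) for a in axes] + [pstdev(a) for a in axes]


def centroid(vectors):
    """Average feature vector of one class."""
    return [mean(col) for col in zip(*vectors)]


def train(labelled_recordings):
    """Training: compute one centroid per gesture label from all its windows."""
    return {
        label: centroid([features(w) for rec in recs for w in windows(rec)])
        for label, recs in labelled_recordings.items()
    }


def classify(model, window):
    """Inference: pick the label whose centroid is nearest (Euclidean distance)."""
    f = features(window)
    return min(model, key=lambda label: math.dist(f, model[label]))


# Collected and labelled data: a still "rest" pose and a vigorous "shake".
rest = [(0.0, 0.0, 1.0)] * 8
shake = [(1.5, 0.0, 1.0), (-1.5, 0.0, 1.0)] * 4
model = train({"rest": [rest], "shake": [shake]})

print(classify(model, [(0.05, 0.0, 1.0)] * 4))                    # rest
print(classify(model, [(1.4, 0.1, 1.0), (-1.4, -0.1, 1.0)] * 2))  # shake
```

Because the dataset is self-recorded and tiny, each step can be inspected directly: the windows, the six feature numbers, and the distance that decides the label.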

Beyond the technical fundamentals, the module opens up a design space: by working with sensors that detect subtle movements and complex motion patterns, students begin to explore interaction forms that go well beyond conventional input methods and to ask what new possibilities these might create for human-machine communication.

Module 3: Designing Interfaces and Interactions with and for AI

AI-based systems challenge many of the assumptions that have long underpinned interaction design. Unlike traditional software, they do not follow fixed, explicitly programmed rules: their outputs vary, their behaviour is hard to anticipate, and their capabilities are harder to communicate to users. This raises urgent questions around trust, transparency, and expectation management. Students analyse Google’s PAIR Guidelines alongside the ten usability heuristics of the Nielsen Norman Group. They ask which classical UX principles still hold, where AI introduces entirely new challenges, and how established methods need to be adapted for intelligent systems.

At the same time, AI opens up new design possibilities for personalisation, adaptive interfaces, and entirely new forms of human-machine interaction. One particularly rich and complex dimension concerns the relationships that form between users and AI systems, whether intentionally designed or not. This raises fundamental questions around animism, anthropomorphism, and the ethical implications of designing systems that evoke emotional responses. Students explore both the potential and the risks of these approaches, including the concept of “Otherware” by Hassenzahl and colleagues [3], which proposes alternative design paradigms beyond anthropomorphic patterns.

Module 4: How to Talk to Transformers — An Introduction to Language Models

Transformer-based language models have become central to a growing range of creative, communicative, and design processes. Yet for most designers, they remain opaque: powerful tools whose outputs can be steered through prompting, but whose underlying logic stays hidden. This module builds an understanding of how these models work that is precise enough to use them deliberately and critically. It is grounded in technical concepts but oriented towards design practice.

Students learn how language is broken down into tokens, how these are organised as vectors in an embedding space, and how meaning emerges through self-attention and feed-forward networks. Building on this foundation, students develop a strategic prompting competency: learning to navigate the embedding space through precise word choices, to use examples rather than abstract descriptions, and to control parameters such as temperature to balance creativity and precision.
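Temperature is easiest to grasp numerically. The sketch below shows the standard temperature-scaled softmax used when sampling from language models. Dividing the scores by the temperature before normalising them sharpens or flattens the resulting distribution. The four candidate tokens and their scores (logits) are made up for illustration.

```python
import math
import random


def softmax_with_temperature(logits, temperature):
    """Turn raw next-token scores into probabilities.

    Temperature < 1 sharpens the distribution (more predictable output);
    temperature > 1 flattens it (more varied output).
    """
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def sample_next_token(tokens, logits, temperature, rng=random):
    """Draw one token according to the temperature-scaled probabilities."""
    probs = softmax_with_temperature(logits, temperature)
    return rng.choices(tokens, weights=probs, k=1)[0]


# Hypothetical scores for candidate next tokens after "The chair is made of".
tokens = [" wood", " metal", " plastic", " clouds"]
logits = [3.0, 2.0, 1.5, -1.0]

for t in (0.2, 1.0, 2.0):
    probs = softmax_with_temperature(logits, t)
    print(t, [f"{tok.strip()}={p:.2f}" for tok, p in zip(tokens, probs)])

rng = random.Random(0)  # seeded so the draw is reproducible
print(sample_next_token(tokens, logits, temperature=1.0, rng=rng))
```

- At temperature 0.2, almost all of the probability lands on "wood".
- At 2.0, the unlikely "clouds" gets a small but real chance of being sampled.

This is the balance between precision and creativity described above.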

The module combines analytical frameworks, hands-on prompting experiments, and embodied exercises, such as spatially mapping semantic relationships, to make the relational, context-dependent nature of transformer-generated meaning tangible. As a concluding exercise, students design their own transformer persona: a coherent AI character defined through a system prompt, tone of voice, example interactions, and temperature settings. This task makes immediately visible how technical parameters, linguistic patterns, and design decisions combine to shape AI behaviour.
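A transformer persona can be thought of as a small bundle of design decisions. The sketch below captures one as a Python dataclass and assembles it into the role/content message list that many chat-based LLM APIs accept. The schema, field names, and example persona are hypothetical, and no particular API is called.

```python
from dataclasses import dataclass, field


@dataclass
class Persona:
    """A transformer persona as a bundle of design decisions (hypothetical schema)."""
    name: str
    system_prompt: str
    tone_of_voice: str
    temperature: float  # passed to the model separately, not inside the messages
    # Few-shot example exchanges: (user message, persona reply)
    examples: list[tuple[str, str]] = field(default_factory=list)

    def to_messages(self, user_input: str) -> list[dict[str, str]]:
        """Assemble a role/content message list in a common chat format."""
        messages = [{
            "role": "system",
            "content": f"{self.system_prompt}\nTone of voice: {self.tone_of_voice}",
        }]
        # Example interactions show the model the intended style by demonstration.
        for user_msg, reply in self.examples:
            messages.append({"role": "user", "content": user_msg})
            messages.append({"role": "assistant", "content": reply})
        messages.append({"role": "user", "content": user_input})
        return messages


grumpy_archivist = Persona(
    name="Grumpy Archivist",
    system_prompt="You are an old archivist who answers questions about design history.",
    tone_of_voice="terse, dry, slightly impatient, never uses exclamation marks",
    temperature=0.4,  # low temperature keeps the character consistent
    examples=[("Can you help me with my poster?", "Possibly. Show me the poster first.")],
)

messages = grumpy_archivist.to_messages("What is the Bauhaus?")
print(len(messages), messages[0]["role"], messages[-1]["content"])
```

In typical chat APIs, temperature is sent as a separate request parameter rather than as part of the conversation. This makes it easy to overlook as a design decision in its own right.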

Module 5: Image Generation

Generative image tools have rapidly become part of everyday creative practice, making it essential for designers to move beyond the belief that image generation is just about finding the perfect prompt. The module combines structured theoretical input with hands-on exercises, including prompt challenges in small groups, to build both technical literacy and practical confidence.

The theoretical foundation focuses on diffusion models, the architecture behind most widely used image generation systems today, including proprietary tools such as DALL-E, Midjourney, and ChatGPT’s image generation, as well as openly available models like Stable Diffusion and Flux. Students learn how these models are trained, how they generate images from a learned representation of a training dataset, and key concepts such as denoising and CLIP embeddings. Equally important is understanding the differences between proprietary and open models in terms of accessibility, transparency, and customisability, as these have direct implications for reflective creative practice. The course consistently encourages the use of open models for their flexibility and adaptability.
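The core idea behind denoising can be sketched numerically. The code below implements the forward (noising) process from the standard DDPM formulation, x_t = sqrt(ᾱ_t)·x_0 + sqrt(1 − ᾱ_t)·ε, on a toy "image" of four pixel values. The linear noise schedule and T = 1000 are common defaults, assumed here for illustration. Stable Diffusion applies the same principle in a compressed latent space rather than directly on pixels, and other systems vary the details.

```python
import math
import random

# Assumed linear beta schedule over T steps (a common DDPM default).
T = 1000
betas = [1e-4 + (0.02 - 1e-4) * t / (T - 1) for t in range(T)]

# alpha_bar[t] = product of (1 - beta) up to step t:
# how much of the original signal survives after t noising steps.
alpha_bar = []
running = 1.0
for b in betas:
    running *= 1.0 - b
    alpha_bar.append(running)


def add_noise(x0, t, rng):
    """Jump straight to step t: x_t = sqrt(abar_t) * x0 + sqrt(1 - abar_t) * eps."""
    eps = [rng.gauss(0.0, 1.0) for _ in x0]
    a = alpha_bar[t]
    xt = [math.sqrt(a) * p + math.sqrt(1.0 - a) * e for p, e in zip(x0, eps)]
    return xt, eps


rng = random.Random(42)
x0 = [0.8, -0.2, 0.5, -0.9]  # pixel values scaled to [-1, 1]
for t in (0, 250, 999):
    xt, _ = add_noise(x0, t, rng)
    print(t, f"signal kept={math.sqrt(alpha_bar[t]):.3f}", [round(v, 2) for v in xt])

# Training teaches a network to predict eps from (x_t, t), conditioned on a text
# embedding (for example from CLIP). Generation reverses the process: start from
# pure noise and remove the predicted noise step by step (denoising).
```

By the last step almost none of the original signal remains. This is why generation can start from pure noise.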

The practical core of the module is a series of Prompt Challenges, in which students are asked to reproduce a given target image using Stable Diffusion. By trying to match a specific visual outcome, students encounter the gap between intention and result. Along the way, they incrementally learn different image generation techniques:

- text-to-image and image-to-image generation
- prompt design
- more advanced methods such as ControlNet, reference images, and inpainting

Given the pace of development in this field, the module changed considerably across semesters. This is reflected in our successive use of different open-source interfaces for Stable Diffusion: first Automatic1111, then ComfyUI, and most recently Invoke AI.

The module closes with a critical reflection on AI-generated imagery: students are encouraged to read generated images not as neutral outputs, but as invocations of training data and the human decisions that shaped it, engaging with questions of bias, characteristic aesthetics, and the cultural implications of these systems. Drawing on the Better Images of AI initiative, they are challenged to produce work that moves beyond the visual stereotypes that AI image generation tends to reproduce.

As the field evolved and our own understanding of what designers need deepened, two further modules were added to the course.

Module 6: Introduction to Agentic Design

Agentic AI — systems that can plan and execute tasks autonomously — represents one of the most significant recent developments in the field. Designing autonomous systems requires a fundamentally different mindset: no longer just shaping interfaces, but defining behaviour, boundaries, and decision-making processes — and asking when autonomy is actually the right choice.

Students learn to distinguish between defined workflows and autonomous agents. Workflows orchestrate processes through fixed sequences, whereas agents dynamically steer their own processes and make independent decisions about tool use and execution strategy. To understand the underlying architecture, students explore the four core components of an agent:

- the large language model as central intelligence
- the system prompt as its operating instruction
- the memory component for short- and long-term context
- tools for interaction with external systems such as APIs or databases
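The distinction between a defined workflow and an agent, and the four agent components, can be made concrete in a short Python sketch. The tools, the task, and especially `fake_llm` are hypothetical. The stub stands in for a real language model, which would choose the next action from the system prompt and the memory. Memory here is only a short-term list, and no long-term store is modelled.

```python
from dataclasses import dataclass, field
from typing import Callable


# Tools: plain functions standing in for external APIs or databases (hypothetical).
def search_references(query: str) -> str:
    return f"3 moodboard images for '{query}'"


def write_note(text: str) -> str:
    return f"saved note: {text}"


TOOLS: dict[str, Callable[[str], str]] = {
    "search_references": search_references,
    "write_note": write_note,
}


def workflow(brief: str) -> list[str]:
    """Defined workflow: the same fixed sequence of steps every time."""
    refs = search_references(brief)
    return [refs, write_note(f"brief={brief}; {refs}")]


@dataclass
class Agent:
    llm: Callable[[str, list[str]], tuple[str, str]]  # 1. model that decides the next action
    system_prompt: str                                 # 2. operating instruction
    memory: list[str] = field(default_factory=list)    # 3. short-term context
    tools: dict[str, Callable[[str], str]] = field(default_factory=lambda: dict(TOOLS))  # 4. tools

    def run(self, task: str, max_steps: int = 5) -> list[str]:
        """Let the model pick actions until it says 'finish' or max_steps is reached."""
        self.memory.append(f"task: {task}")
        for _ in range(max_steps):
            action, arg = self.llm(self.system_prompt, self.memory)
            if action == "finish":
                break
            result = self.tools[action](arg)
            self.memory.append(f"{action} -> {result}")
        return self.memory


def fake_llm(system_prompt: str, memory: list[str]) -> tuple[str, str]:
    """Hypothetical stub standing in for a real language model's decision."""
    if not any(m.startswith("search_references") for m in memory):
        return "search_references", memory[0].removeprefix("task: ")
    if not any(m.startswith("write_note") for m in memory):
        return "write_note", "idea: combine the references into a poster series"
    return "finish", ""


print(workflow("brutalist posters"))
agent = Agent(llm=fake_llm, system_prompt="You are a creative sparring partner.")
for line in agent.run("brutalist posters"):
    print(line)
```

The workflow always runs the same two steps. The agent's sequence is whatever the model decides, bounded only by `max_steps`. This is where the questions of reliability and autonomy raised in this module come in.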

For practical implementation, students work with n8n, a visual automation platform that enables rapid prototyping as well as more complex implementations. As a hands-on exercise, they build an agent configured as a personal creative sparring partner, developing an understanding of when agents are genuinely useful and when the reliability of a defined workflow is the better choice.

Module 7: Playful Ideation with Generative AI

Unlike the other modules, Playful Ideation is less concerned with technical understanding and more with creative practice and attitude. It responds to two problems that emerged from working with generative AI in design contexts.

First, creative work with generative AI tends to be highly cognitive and outcome-focused, driven primarily by text-based prompting. Yet creative processes thrive on messiness, iteration, and creative friction. The module offers students concrete tactics to reintroduce these qualities into their work with AI, making co-creation less cognitive and more intuitive and action-based.

Second, AI-generated content tends to look flat, glossy, and increasingly similar. This uniformity reduces diversity and, with it, the conditions for creative thinking, ideation, and innovation. Ambivalence and surprise, however, are crucial for fostering creativity and imagination. The module therefore explores strategies for provoking surprise and unexpected results, and for encouraging “otherness”.

The technical setup of the module builds on Transferscope, a tool that combines image generation with ControlNet to enable a deceptively simple but powerful interaction: with a single button, users can capture the visual style of any object or concept and transfer it onto another object or scene. This one-button interface deliberately removes the complexity of text-based prompting, encouraging a more playful, intuitive, and exploratory engagement with generative AI.

Connecting the Modules: A Hands-On Assignment

One recurring challenge in earlier iterations of the course was that the modules felt isolated from one another. Students engaged with each topic individually, but the connections between topics were not always visible. In response, the two most recent iterations introduced a hands-on assignment that ties the modules together by applying them in a final project.

Real-world AI systems rarely rely on a single technology or modality; they typically combine several. Language models are combined with image generation, physical sensors feed into agentic workflows, and multimodal interactions draw on several of these layers at once. The assignment makes this visible by giving students the experience of combining the tools and concepts from across the week into a coherent, functioning system. Beyond technical integration, the assignment also marks a first step towards conceptual engagement and use case development. It invites students to explore interesting scenarios and applications for AI systems in a playful, low-stakes way.

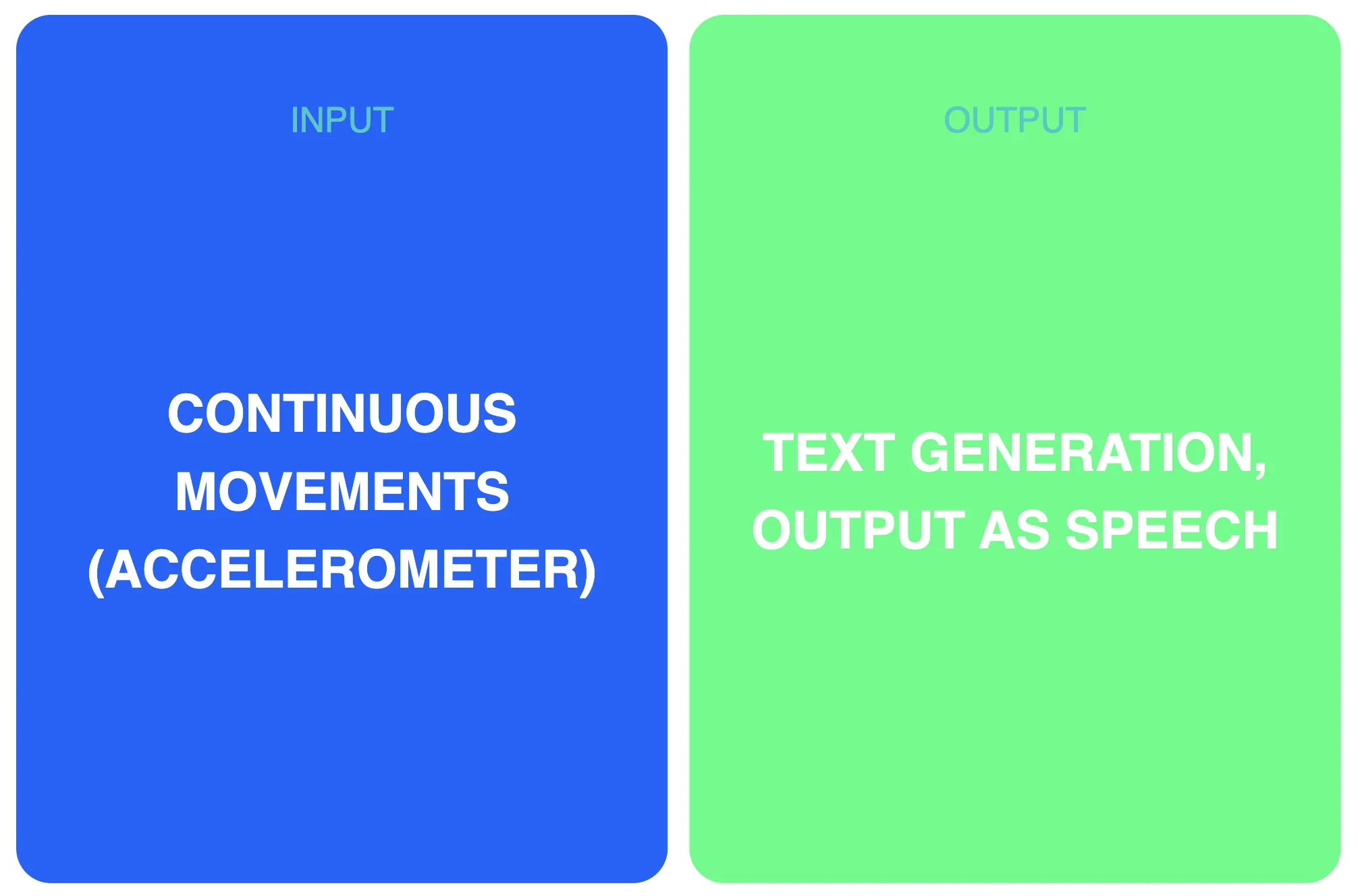

Two different assignment formats were developed and tested across iterations. The first uses Input/Output Cards as a starting point to explore combinations of input and output modalities across different AI types.

Example of combinations of the Input/Output Cards for concept generation

Students are given different combinations of input/output cards at random and are encouraged to ideate concepts that make sense of them. The use of these constraints aims to encourage students to think beyond familiar concepts and outside the box, building an understanding of how different input/output modalities can be orchestrated into coherent interaction concepts in a playful, low-threshold way.
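The random dealing of cards can be sketched in a few lines of Python. The card lists below are hypothetical examples, not the course's actual card set. A fixed seed makes a draw reproducible.

```python
import random
from typing import Optional

# Hypothetical card decks for illustration only.
INPUT_CARDS = ["facial expression", "voice", "gesture", "vibration", "text", "image"]
OUTPUT_CARDS = ["light", "sound", "generated image", "generated text", "movement"]


def deal(n_teams: int, seed: Optional[int] = None) -> list[tuple[str, str]]:
    """Give each team one random input card and one random output card."""
    rng = random.Random(seed)
    return [(rng.choice(INPUT_CARDS), rng.choice(OUTPUT_CARDS)) for _ in range(n_teams)]


for team, (inp, out) in enumerate(deal(4, seed=7), start=1):
    print(f"Team {team}: design something that turns {inp} into {out}")
```

Because cards are drawn independently, teams may receive unusual pairings. These constraints are what push ideation beyond familiar concepts.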

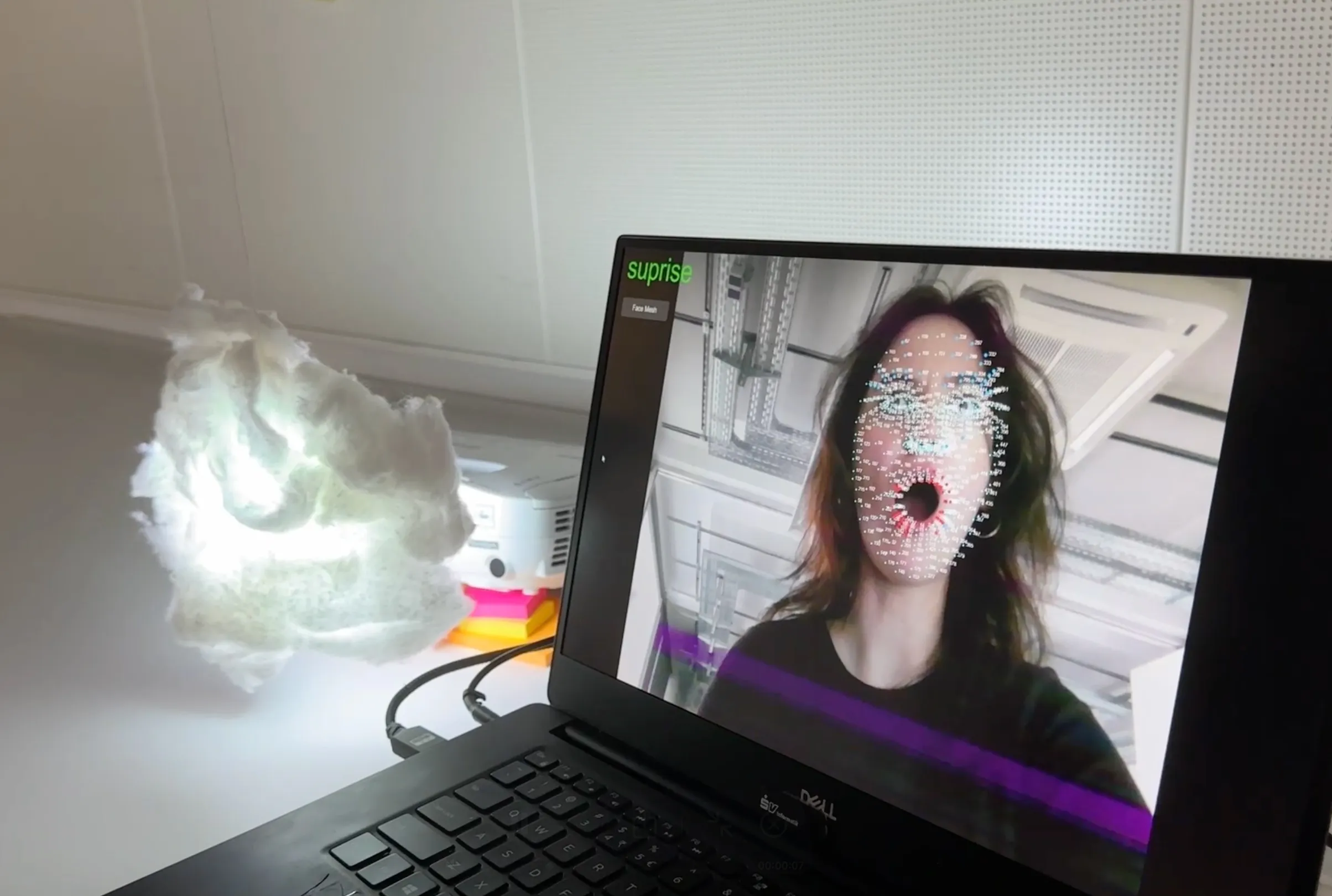

A concept created with the Input/Output Cards: Gloomy, a lamp that responds to facial expressions (input) with visual and auditory feedback (output), by Rebeka Tot and Vivien Cai.

The second assignment is a more technically integrated pipeline that connects sensor-based machine learning, language models, and image generation into a single agentic workflow. A physical sensor captures events from the environment and sends them to an n8n workflow, where a storytelling agent generates a narrative. At key moments in the story, the agent produces images to accompany the text, maintaining visual consistency through image-to-image generation. Students configure the entire pipeline themselves, making design decisions at every level: from the sensor interaction and the agent’s narrative style, to the visual aesthetic of the generated images.
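The following sketch shows one piece of this pipeline: handing a classified sensor event to the n8n workflow. It rests on several assumptions, since the source does not say how the course setup transmits events:

- A small relay script runs on a computer connected to the sensor.
- An n8n Webhook node listens at a placeholder URL. Port 5678 is n8n's default, but the host and path are hypothetical.
- The event label is made up.

```python
import json
import urllib.request
from datetime import datetime, timezone

# Placeholder URL for an n8n Webhook node; host and path are hypothetical.
WEBHOOK_URL = "http://localhost:5678/webhook/sensor-event"


def build_event_request(label: str, confidence: float, url: str = WEBHOOK_URL) -> urllib.request.Request:
    """Package one classified sensor event as a JSON POST request for the workflow."""
    payload = {
        "event": label,  # e.g. the label predicted by the sensor's ML model
        "confidence": round(confidence, 2),
        "timestamp": datetime.now(timezone.utc).isoformat(),
    }
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send(request: urllib.request.Request) -> int:
    """Deliver the event; the storytelling agent in the workflow takes it from there."""
    with urllib.request.urlopen(request, timeout=5) as response:
        return response.status


req = build_event_request("vibration_detected", 0.93)
print(req.get_method(), req.full_url, req.data.decode())
# send(req)  # uncomment with a running n8n instance listening at WEBHOOK_URL
```

Keeping the payload small and explicit (label, confidence, time) leaves the narrative interpretation of each event entirely to the agent's prompt. This is one of the design decisions students make.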

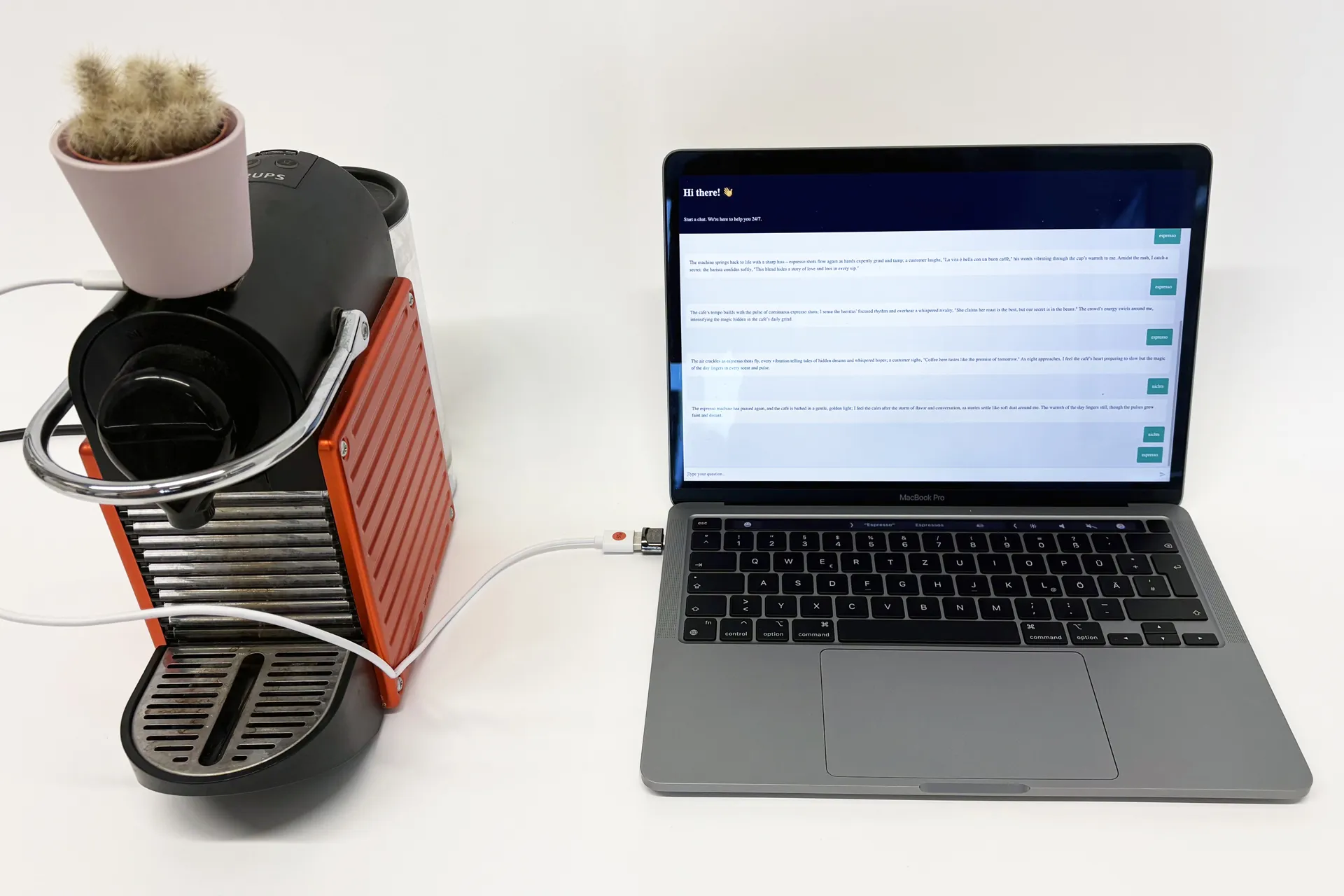

The project by Trang-Anh Nguyen and Emily Ulrich tells stories of everyday life in a café from the perspective of a plant. The plant perceives its surroundings through the vibrations of the machine and interprets the events happening around it.

Cross-Cutting Elements

Alongside the individual modules, some recurring elements run through the entire course and were introduced in response to specific challenges that emerged across iterations.

The first concerns vocabulary. We noticed early on that key concepts introduced in one module were not being carried forward into the next: the shared language needed to connect the modules was not sticking. In response, we introduced a daily practice of collecting the most important terms from each module on a large poster, displayed throughout the week. This made concepts visually accessible and created a growing reference point that could be explicitly linked to each new day’s content.

The second concerns critical reflection. The constant emergence of new AI tools and capabilities tends to generate enthusiasm among students, which is valuable, but needs to be balanced by a critical perspective. After each module, we introduced a structured discussion asking what ethical, societal, or ecological implications the day’s content might carry, and how designers can engage with these responsibly. This was not treated as a separate topic, but as an integral part of each module.

In early iterations, we developed a self-study course, the AI Primer, designed to be completed before the block week. It covered foundational concepts and societal implications, and introduced key vocabulary through videos and reading materials. In practice, however, we found that a front-loaded, self-study format was not the most effective way to build this foundation. The content was difficult to discuss and contextualise in isolation, and technical explanations were harder to absorb without hands-on experience to anchor them. Over time, we moved these elements into the course itself, distributing vocabulary, conceptual framing, and critical reflection across the modules, where they could be directly connected to the content of each day. The vocabulary posters and the daily reflection discussions are both expressions of this shift.

Reflections and Learnings

Course Format

Intensive block format proved effective. The course was initially offered in a distributed format, with sessions spread across several weeks. Moving to an intensive one-week block allowed students to devote their full attention to the complex and demanding subject area. Full days rather than isolated few-hour slots allowed concepts to accumulate and connect, creating the conditions for a deeper and more sustained engagement with the material.

Earlier curriculum placement would be preferable. The course has been offered in the fourth semester, and while this has worked well, we would advocate for an earlier placement. There is a genuine tension here: introducing AI tools too early risks shortcutting foundational skills. Evaluating the quality of AI output requires knowing how to do things yourself. At the same time, students are using these technologies regardless, often without a critical or reflective framework. Teaching that framework early, in parallel with foundational skills, seems increasingly important.

Content and Development

Foundational modules are robust, but require ongoing updates. The core structure has remained stable across iterations, and the modules build well on each other. Nonetheless, the specific content needs to be revisited regularly to keep pace with developments in the field.

New topics must be scanned and selectively integrated. The field moves fast, and new themes regularly emerge that are relevant for design education. Integrating them requires making deliberate choices about shifting focus and removing content — the scope of the course is finite.

Teach the principles, not the tools. The tool landscape in AI changes rapidly, and what is standard practice today may be obsolete within a semester. Rather than building the course around specific tools, we focused on teaching the underlying principles and functional logic that transfers across them. Tools were used as vehicles for learning, not as ends in themselves. The goal was to equip students with the conceptual foundation to independently adopt and critically evaluate new tools as they emerge — as illustrated by our own repeated shifts in the image generation module, from Automatic1111 to ComfyUI to Invoke AI.

Pedagogical Approach

Teach co-creation, not automation. Working with AI in creative practice raises fundamental questions about the role of designers and the nature of the design process itself. We consistently encouraged students to engage with AI as a creative counterpart rather than a shortcut — exploring different modes of collaboration, questioning when automation is appropriate, and developing a conscious, reflective stance towards the tools they use. Developing a reflective design practice with AI is, we believe, as important as technical literacy.

The framing of AI must connect to students’ own practice. Abstract introductions to AI rarely resonate in a design context. What makes the subject tangible is connecting it directly to students’ existing design practice and motivations — a framing that will inevitably vary depending on the institutional culture and programme.

Hands-on exploration should connect across modules — and towards application. From the beginning, hands-on exploration was central to the course. Over time, however, we recognised that keeping it within individual module silos was not enough — students needed the experience of combining technologies and concepts across modules. The connecting assignment bridges this gap, and in doing so also marks a first step towards conceptual engagement and the development of meaningful AI use cases.

Critical reflection is not a separate topic — it is part of every topic. Ethical, societal, and ecological questions do not sit outside the technical content of the course; they arise directly from it. By embedding reflection into each module, we aimed to make it an instinctive part of how students engage with AI — not something they do afterwards, but something they do throughout.

Conclusion

The course Foundations of AI + Design has evolved considerably since its first iteration in 2023, through ongoing reflection, experimentation, and response to a field that continues to change at a remarkable pace. What has remained stable throughout is the underlying goal: to develop AI Intuition in design students, a broad understanding of how AI technologies work, what they can and cannot do, and how to engage with them critically and purposefully as a design material.

The modular structure, the intensive block format, and the cross-cutting elements of vocabulary-building and critical reflection have proven to be a robust foundation. At the same time, the course has had to remain open to change, adding new modules, refining approaches, and continuously asking what designers actually need to engage meaningfully with AI.

We share this course format in the spirit of the course itself: as a work in progress, open to critique, iteration, and further development.

Acknowledgements

The following current and former members of the AI+Design Lab were involved in the development and implementation of the course format and the modules described: Rahel Flechtner, Jordi Tost, Felix Sewing, Christopher Pietsch, Ron Mandic, Moritz Hartstang, Aeneas Stankowski, and Alexa Steinbrück.

References

[1] Flechtner, R., & Stankowski, A. (2023). AI Is Not a Wildcard: Challenges for Integrating AI into the Design Curriculum. Proceedings of the 5th Annual Symposium on HCI Education, 72–77. https://doi.org/10.1145/3587399.3587410

[2] Flechtner, R., Kilian, J., & Iovine, I. (2025). Physical AI: Sensor-Based AI in Art and Design. In F. Jenett, R. Flechtner, & S. Maris (Eds.), un/learn ai: navigating AI in aesthetic practices. Hochschule Mainz. https://doi.org/10.25358/openscience-11811

[3] Hassenzahl, M., Borchers, J., Boll, S., Rosenthal-von der Pütten, A., & Wulf, V. (2021). Otherware: How to best interact with autonomous systems. Interactions, 28(1), 54–57. https://doi.org/10.1145/3436942